Key Takeaways

- The modern data stack in 2026 is no longer defined by which tools you use. It is defined by how well those tools are integrated, governed, and designed to support both human analysts and AI systems simultaneously.

- Modern data architecture has evolved from a collection of best-of-breed tools into a coordinated system where each layer (ingestion, storage, transformation, orchestration, governance, and activation) reinforces the reliability of every other layer.

- The modern data warehouse now serves a dual role: supporting structured analytics for human decision-makers and providing the clean, governed inputs that AI and LLM workflows depend on.

- Modern data stack tools deliver value when selected based on architectural fit, interoperability, and governance support. Not market popularity or feature lists.

- The organisations building the most reliable modern data platform architecture are not chasing every new technology. They are building governed, adaptable foundations that scale predictably.

Here is a situation we encounter more often than it should happen.

An enterprise team has invested in the right tools. Snowflake for warehousing. dbt for transformation. Fivetran for ingestion. Looker for analytics. The modern data stack, by every definition, is in place.

And yet the leadership team still cannot agree on a single revenue number. Two dashboards, built by two different teams on the same underlying data, return different figures. The data engineering team spends most of its time firefighting pipeline failures rather than improving the system. An AI initiative stalls because the data feeding it is inconsistent enough to produce unreliable outputs.

The tools were right. The architecture discipline was not.

This is the hard truth about the modern data stack in 2026: the technology is no longer the bottleneck. Most organisations can access the same tools. What separates the teams that extract genuine business value from the ones that keep rebuilding the same pipelines is how deliberately they have designed and governed the system those tools sit inside.

This guide covers what modern data architecture actually requires in 2026 — the layers that matter, where AI fits in, which tools to choose and why, and the implementation decisions that determine whether a modern data stack becomes a strategic asset or an expensive maintenance burden.

What is the Modern Data Stack in 2026?

A modern data stack is a cloud-native ecosystem designed to ingest, store, transform, govern, and activate data across analytics and AI workflows, with reliability and scale built in from the start.

What distinguishes it from earlier architectures is not just the tooling. Legacy systems were built around ETL (Extract, Transform, Load), a model where data was transformed before it entered storage, using rigid pipelines that required significant engineering effort to modify. This approach made sense when storage was expensive and computing power was limited. It stopped making sense when cloud infrastructure made both elastic and cheap.

Modern data architecture flips this model. ELT (Extract, Load, Transform) loads raw data into a centralised warehouse first and applies transformations after, using declarative, version-controlled workflows that are far easier to iterate on. This shift is not cosmetic. It changes how quickly teams can respond to new business requirements, how reliably they can trace data lineage, and how confidently they can feed AI systems with governed, consistent inputs.

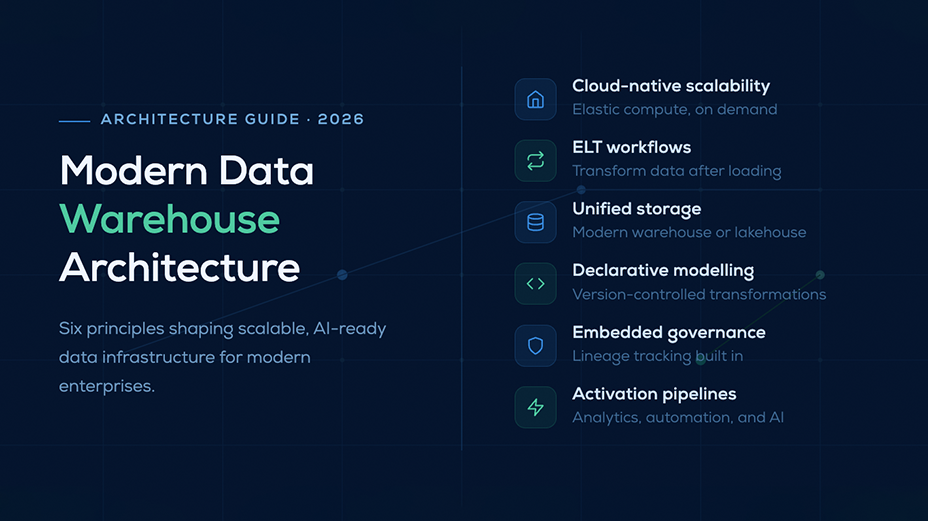

The core principles defining modern data warehouse architecture in 2026 are:

Cloud-native scalability with elastic compute— compute and storage scale independently, meaning organisations pay for what they use and can handle growth without redesigning their infrastructure.

ELT workflows that transform data after loading— raw data is preserved in its original form, transformations are version-controlled and repeatable, and changes can be made without disrupting upstream data sources.

Unified storage through a modern data warehouse or lakehouse— a single environment that supports structured, semi-structured, and increasingly vector data, eliminating the fragmentation that makes governance impossible.

Declarative modelling with version control— transformations are written as code, reviewed like code, and versioned like code. This creates a transparent audit trail of every business definition the organisation relies on.

Embedded governance and lineage tracking— data quality, compliance controls, and lineage visibility are designed into the architecture from day one, not added as an afterthought when something breaks.

Activation pipelines that serve both analytics and AI— the modern data stack does not end at the dashboard. Governed data flows into operational systems, AI applications, and reverse ELT workflows that embed intelligence directly into business processes.

What are the Essential Layers of Modern Data Stack Architecture

The strength of a modern data stack lies not in its individual components but in how each layer reinforces the reliability of every other layer. A well-designed modern data platform architecture is a coordinated system where data flows predictably from source to activation, with governance and observability embedded throughout.

Ingestion & Integration

The ingestion layer captures data from SaaS platforms, APIs, operational databases, and event streams. Its job is deceptively simple, that is to get data from where it lives to where it needs to go. But the engineering discipline required to do it reliably at scale is significant.

Modern ingestion pipelines are built for fault tolerance. When a source system changes its schema, the pipeline should handle it gracefully rather than fail silently. When a source goes offline, the pipeline should recover without data loss. Change data capture, which tracks row-level changes in operational databases in near real time, is now standard practice for teams that need their analytics to reflect what is happening in the business today, not yesterday.

The downstream consequences of weak ingestion are severe. Fragile pipelines produce inconsistent inputs. Inconsistent inputs produce unreliable analytics. Unreliable analytics erode trust; and once business stakeholders stop trusting the data, they stop using it.

Storage & Modern Data Warehouse Foundation

At the core of modern data architecture sits the modern data warehouse or lakehouse. It is a unified environment that supports structured, semi-structured, and vector data within a single governance boundary.

Cloud-native warehouses separate compute from storage, which solves two problems simultaneously. First, organisations can scale each dimension independently based on actual workload requirements, rather than provisioning fixed infrastructure for peak demand. Second, multiple compute clusters can query the same underlying data without contention, enabling different teams and workloads to operate concurrently without degrading each other’s performance.

The centralisation that the modern data warehouse enables is what makes governance possible. When data lives in one place with a single access control model, compliance is manageable. When it is fragmented across dozens of systems with inconsistent permission models, it is not.

Transformation Layer

The transformation layer is where raw data becomes the business-aligned models that analysts and AI systems actually use. It is also where the most consequential decisions about data semantics are made and where the most expensive mistakes tend to accumulate.

In a well-designed modern data architecture, transformations are written in SQL, stored in version control, peer-reviewed before deployment, and tested automatically to catch regressions. This sounds like standard engineering practice. In most data teams, it is not. Transformations are often written by analysts who were never trained as engineers, stored in notebooks or BI tools rather than source control, and modified without review. The result is a growing body of undocumented business logic that nobody fully understands.

The cost of this pattern compounds. When two teams define the same metric differently in their transformations (one including a particular revenue category, one excluding it), the resulting metric dispute is not a data problem. It is an architecture problem. The semantic layer exists to solve it.

Orchestration & Observability

Orchestration frameworks coordinate the execution of pipelines, ensuring that transformations run in the correct order, dependencies are respected, and failures are handled gracefully rather than silently corrupting downstream data.

Observability is what makes orchestration trustworthy. A pipeline that runs on schedule but produces stale, incorrect, or incomplete data is worse than a pipeline that fails loudly. Silent failures hide until something important breaks. Modern observability tools monitor freshness, schema drift, volume anomalies, and data quality metrics in real time, enabling engineering teams to detect and resolve issues before they affect the dashboards and AI systems that depend on clean data.

Semantic Layer & Metrics Governance

The semantic layer is the most underinvested component in most modern data stacks. It is also the one whose absence causes the most visible business pain.

It establishes shared, centralised definitions for the metrics and business entities that the organisation uses to make decisions: revenue, active users, conversion rate, churn. When these definitions live in the semantic layer, every dashboard, every report, and every AI query draws from the same source of truth. When they do not, and each analyst or team defines them independently in their own transformation code, the result is the scenario that opened this guide: two dashboards returning different numbers, and a leadership team that cannot agree on what the business is actually doing.

For AI workflows specifically, semantic clarity is not optional. Language models and AI agents amplify whatever inconsistencies exist in the data they consume. A governed semantic layer reduces the surface area for inconsistent outputs and makes AI applications more predictable and trustworthy at scale.

Activation & Consumption

Activation is the layer that transforms modern data architecture from an internal engineering asset into a business-facing capability. It delivers governed, business-ready data to the systems where decisions are made and actions are taken — dashboards, operational tools, AI applications, and reverse ELT pipelines that write insights back into the CRM, the product, or the support platform.

The organisations that treat activation as an afterthought are leaving most of the value on the table. They build sophisticated ingestion and transformation pipelines but leave analysts to manually export data into the tools the business actually uses. When the activation layer is designed thoughtfully, data intelligence becomes embedded in everyday workflows rather than accessible only to those who know how to write SQL.

The Role of AI & LLMs in the Modern Data Stack

AI is no longer an external consumer of data infrastructure. In 2026, it is a primary one, and it has fundamentally different requirements from human analysts.

Human analysts tolerate a degree of inconsistency. They know which metrics are reliable and which are not. They understand the historical quirks of the data. They bring context that compensates for ambiguity in ways that AI systems cannot.

AI amplifies inconsistency rather than tolerating it. An LLM querying a poorly governed modern data warehouse will generate confident-sounding answers based on conflicting definitions, stale data, and undetected schema drift. The output may look correct. It will not be reliable. At scale, unreliable AI outputs do not just cause individual errors. They embed systematic distortions into business decisions.

This has a clear implication for modern data platform architecture: AI readiness is not a feature to add later. It is a design requirement from day one. The teams that are building AI systems on top of well-governed, semantically consistent data stacks are able to deploy those systems with confidence. The teams building AI on top of fragile pipelines are discovering, repeatedly, that the data infrastructure is the limiting factor and not the model.

The key 2026 shifts shaping AI-ready data platform architecture are:

Semantic governance as a prerequisite for AI consistency: Shared metric definitions and centralised business logic create the stable inputs that AI systems require to behave predictably.

AI-assisted pipeline maintenance: Tooling now exists to detect anomalies, suggest remediation, and in some cases auto-heal data quality issues without human intervention. This reduces the operational burden on engineering teams while improving pipeline reliability.

Self-healing observability: Observability frameworks that automatically adapt to schema changes and volume fluctuations reduce the false positive rate that causes alert fatigue in manually configured systems.

Infrastructure convergence: The boundary between the analytics warehouse, the ML feature store, and the vector database is dissolving. Modern data stack tools increasingly support all three workloads within a single governance boundary, reducing the duplication and integration overhead that previously made AI infrastructure expensive to maintain.

What are the Best Modern Data Stack Tools in 2026

Selecting modern data stack tools based on interoperability, governance support, and architectural fit produces better outcomes than selecting based on feature lists or market momentum. The tool that performs best in a benchmark is not always the tool that performs best in a real production environment with real integration requirements.

| Layer | Leading Tools | Strengths | Considerations |

| Ingestion | Airbyte, Fivetran | Broad connectors, reliability, change data capture | Volume-based pricing escalates at scale |

| Transformation | dbt, Dataform | Declarative modelling, version control, lineage | SQL expertise required; governance discipline essential |

| Warehousing | Snowflake, BigQuery, Databricks | Elastic scale, AI readiness, unified governance | Cost management needed; architecture discipline determines ROI |

| Orchestration | Airflow, Prefect, Dagster | Workflow control, dependency management | Learning curve; operational complexity grows with pipeline count |

| Observability | Monte Carlo, Soda | Data quality monitoring, anomaly detection | Enterprise pricing; adoption discipline required |

| Analytics | Tableau, Looker, Power BI | Semantic modelling, visualisation | Ecosystem lock-in risk; governance varies by tool |

| Governance & Reverse ELT | Atlan, Census | Activation workflows, data cataloguing | Adoption discipline critical for value realisation |

One observation worth making from working across these tool ecosystems: the gaps between tools, where data moves from one layer to the next, are where most production issues originate. Vendor documentation describes each tool’s capabilities in isolation. Real-world architectures require them to work together reliably under conditions that the documentation does not anticipate. This is where engineering maturity becomes the differentiator between a modern data stack that works in a proof of concept and one that works in production, consistently, at scale.

Modern Data Stack vs Legacy Systems

The practical difference between modern ELT architecture and legacy ETL systems is not primarily about technology. It is about how quickly an organisation can respond to change.

| Aspect | Modern Data Stack | Legacy Systems |

| Architecture | Cloud-native, modular | Monolithic |

| Scalability | Elastic; compute and storage scale independently | Fixed capacity; scaling requires infrastructure investment |

| Processing | Real-time capable; data is available as soon as it is loaded | Batch-bound; insights are delayed by transformation cycles |

| Cost Model | Consumption-based; pay for what you use | High capital investment; pay for capacity whether used or not |

| Flexibility | Best-of-breed tools; replace components independently | Vendor lock-in; changing one component often requires changing many |

| AI Readiness | Designed for AI consumption from the start | Minimal; not built to support vector workloads or LLM pipelines |

The organisations that have adopted modern data warehouse architecture consistently report two changes that compound over time: faster time-to-insight, and improved cross-team trust in shared data. Both are downstream effects of centralisation and governance, not of any individual tool.

What are the Core Benefits of the Modern Data Stack?

A well-implemented modern data stack changes how organisations operate with data. The benefits listed below are not theoretical. Each one has a specific mechanism, and a specific failure mode when the architectural discipline that produces it is absent.

Faster time to insight: Cloud-native ELT pipelines load raw data immediately and apply transformations iteratively. A business question that previously required a data engineering ticket and a two-day wait can be answered in hours. The compounding effect is that teams begin asking more questions, because the cost of asking has dropped below the threshold of patience.

Elastic scalability without infrastructure strain. When compute and storage scale independently, a sudden spike in query volume does not degrade performance for everyone else on the platform. Organisations that manage seasonal demand patterns (retail, financial services, healthcare) benefit particularly from this architecture. Growth no longer requires a procurement cycle.

Consistent business semantics: Centralised modelling with a governed semantic layer means every team is working from the same metric definitions. This eliminates the metric disputes that consume executive time and undermine confidence in data. When the CFO and the Head of Product look at the same dashboard and see the same number, it is because the definition lived in one place, not because they happened to agree.

AI-ready data foundations: Governed, structured pipelines create predictable inputs for automation, analytics, and intelligent systems. This is increasingly the threshold question for enterprise AI initiatives: not whether the model is capable, but whether the data feeding it is trustworthy enough to produce reliable outputs at scale.

Reduced operational friction: Automated orchestration and observability reduce the proportion of engineering time spent on reactive maintenance. Teams that previously dedicated the majority of their capacity to firefighting fragile pipelines can redirect that attention toward improving the system. That is a compounding advantage over time.

Improved collaboration across teams: Analysts, engineers, and product teams operating on a shared data layer can iterate faster and align more easily. The absence of this shared layer is one of the most common root causes of the metric disputes and duplicated effort that slow data-driven organisations down.

Cost efficiency through modular tooling: Consumption-based architectures allow organisations to scale investment proportionally with usage. This is a meaningful advantage over fixed-infrastructure legacy systems, particularly for organisations with variable workloads.

Challenges & Risks in Adopting a Modern Data Stack

The modern data stack is not a shortcut to data maturity. Organisations that treat it as one consistently encounter the same set of compounding problems.

Fragmentation without architectural ownership: Best-of-breed stacks become fragmented when nobody owns the overall architecture. Individual teams adopt tools that work well in isolation but integrate poorly at the boundaries. The result is a modern data stack in name but a legacy architecture in practice, with all the maintenance burden of the old system and none of the governance benefits of the new one.

Pipeline expansion outpacing governance: As organisations build more pipelines, the surface area for quality failures grows. Without lineage tracking, quality enforcement, and compliance controls that scale with the pipeline count, rapid expansion creates technical debt that eventually becomes unmanageable. This is the point at which organisations discover that their modern data platform architecture was never actually governed. It was just fast.

Conflicting metric definitions at scale: Without centralised modelling and a governed semantic layer, different teams build different definitions of the same business concepts into their own transformation code. The inconsistency is invisible until it produces contradictory outputs. At that point, the trust damage is already done. Rebuilding stakeholder confidence in data after a significant metric incident is a multi-month effort.

Underestimated engineering discipline: Modern orchestration and transformation workflows require the same engineering discipline as production software: code review, testing, version control, documentation. Many data teams, built primarily from analysts, do not have these practices established. Tooling alone does not install them.

Consumption-based cost escalation: Cloud-native pricing models scale with usage. Without active monitoring of query patterns and compute consumption, costs can escalate significantly as adoption grows. This is particularly common in the first 12 months after a migration, when query volumes increase rapidly as more stakeholders gain access to data that was previously inaccessible.

Custom engineering beyond vendor defaults: Modern data stack tools handle the common cases well. Edge cases (custom connectors, complex transformation logic, non-standard integration patterns) require engineering effort that vendor documentation underrepresents. Organisations that scope migrations based on vendor demos often discover this gap at an inconvenient moment in the implementation.

These are not architectural flaws. They are predictable consequences of treating tooling as a proxy for maturity. A modern data stack built on disciplined implementation practices avoids most of them. One built on the assumption that the tools will handle it does not.

The Future of the Modern Data Stack Beyond 2026

The direction of the modern data stack is toward convergence: fewer boundaries between analytics, ML, and AI infrastructure, governed by design rather than retrofitted with controls after the fact.

One pattern that captures this shift well. An enterprise client we worked with had invested appropriately in modern data stack tools across every layer: Snowflake, dbt, Fivetran. The architecture diagram looked right. The reality was inconsistent metrics, fragile pipelines, and an AI initiative that could not get off the ground because the data quality was insufficient to produce reliable model outputs.

The problem was not the tools. It was that the transformation layer had been built without version control or peer review. The semantic layer had never been implemented; each team defined its own metrics independently. Observability was configured for infrastructure monitoring but not for data quality. The architecture existed on paper but had not been built with the discipline that makes modern data architecture work in practice.

After a structured assessment across ingestion, transformation, governance, and activation, we streamlined the ELT workflows, introduced version-controlled transformation practices, built a governed semantic layer that centralised the organisation’s metric definitions, and strengthened observability to detect data quality issues before they reached downstream systems. The result was improved reliability, consistent metrics across teams, and a data foundation stable enough to support the AI initiative that had previously stalled.

The organisations succeeding with modern data warehouse architecture in 2026 are not the ones with the most sophisticated tools. They are the ones that have built the discipline to use those tools correctly, and the maturity to know that the architecture diagram is where the work begins, not where it ends.

FAQs

Not exactly. A modern analytics stack is focused on reporting, dashboards, and business intelligence. It is concerned with how data is visualised and consumed by human analysts. A modern data stack is broader infrastructure: it covers ingestion, storage, transformation, governance, and activation, with analytics as one output among several.

The distinction matters increasingly as organisations build AI and operational workflows on top of their data infrastructure. A modern data stack designed only for analytics will not reliably support LLM pipelines, ML training workflows, or reverse ELT use cases. The architecture needs to be designed for the full range of consumers from the start.

The principles that produce reliable outcomes are: centralise governance before you scale, version-control your transformations, define your metrics in one place, and monitor data quality rather than just pipeline execution. Organisations that apply these principles consistently end up with data infrastructure that improves over time. Organisations that skip them end up with infrastructure that requires constant intervention to keep running.

A modern data stack architecture diagram shows how data moves through a governed lifecycle, from ingestion through storage, transformation, orchestration, semantic modelling, and activation. Its value is not in the diagram itself but in the shared understanding it creates. When engineering, product, and analytics teams all work from the same architectural model, conversations about what to build next and how to resolve production issues become significantly more productive.

AI is changing the requirements for data infrastructure in two ways simultaneously. First, it is a new class of data consumer with stricter requirements for consistency, governance, and semantic clarity than human analysts have. Second, it is increasingly being used to improve the data stack itself, through AI-assisted pipeline maintenance, anomaly detection, and self-healing observability. The organisations designing their modern data platform architecture with AI as a primary consumer from the start are building a foundation that will serve both use cases. Those treating AI readiness as a future upgrade are accumulating a debt that will be expensive to repay.

Start with governance support, not features. A tool that produces clean, traceable, consistently defined data within a well-governed architecture delivers more business value than a more capable tool that requires significant custom engineering to integrate reliably. The question to ask of any tool is not what it can do, but how it behaves at the boundaries: when data moves in, when schemas change, when something fails. Those are the moments that determine whether a modern data stack is reliable in production or merely impressive in a proof of concept.

The most reliable signal is metric disagreement. When two teams produce different numbers from the same underlying data, the root cause is almost always a governance failure in the transformation or semantic layer. Other signals include engineering teams spending more time on maintenance than on improvement, AI initiatives stalling because data quality is insufficient, and a growing backlog of data requests that the team cannot service within a timeframe that the business finds acceptable. These are not tooling problems. They are architecture problems, and modern data architecture exists to solve them.